Routing errors and network disruption can also impact availability. Many things can impact server availability from software problems, operating system errors and hardware failures.

With a dedicated server model, the server MUST be online and available to the clients at all times, or the application simply will not work. The most obvious problem faced by all client-server applications is one of availability. With our definitions out of the way, let’s examine some challenges with Client-server networks.

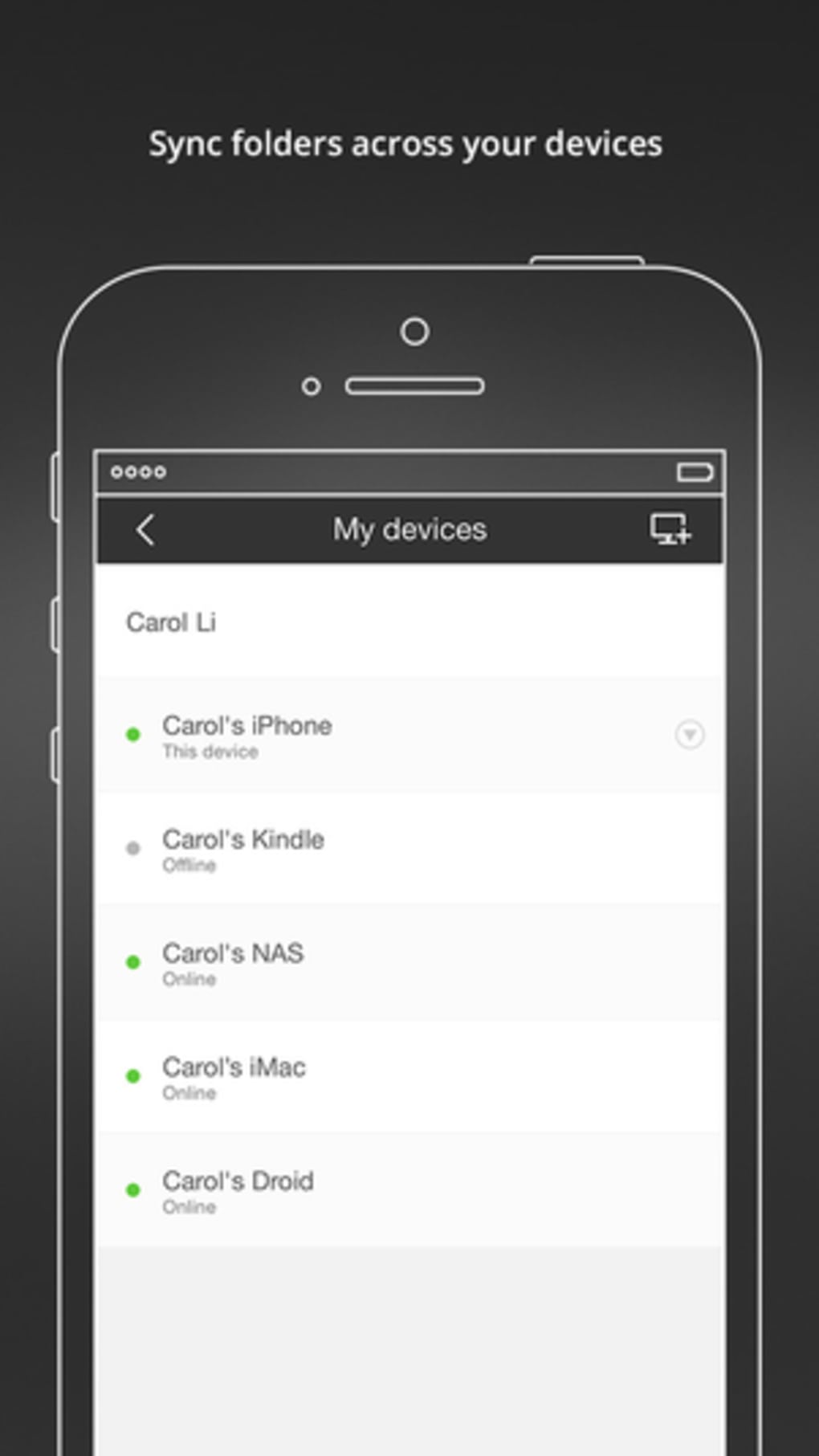

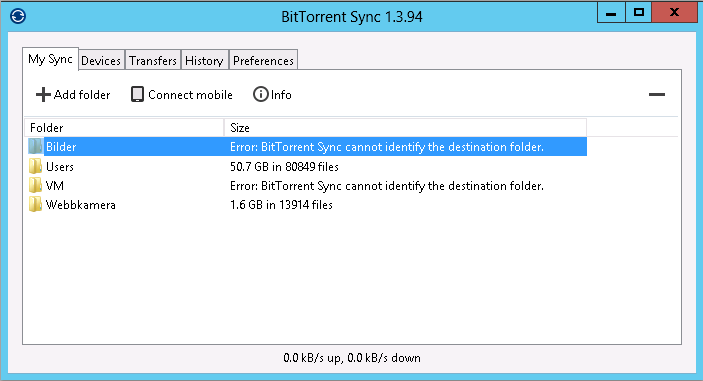

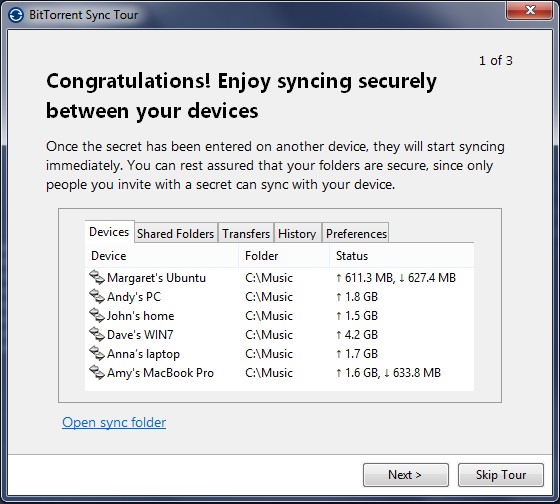

Today these peer-to-peer concepts continue to evolve inside the enterprise with P2P software like Resilio Sync (formerly bittorrent sync) and across new technology sectors such as blockchain, bitcoin and other cryptocurrency.

When the Internet became a content network with the advent of the web browser, the shift towards client-server was immediate as the primary use case on the internet became content consumption.īut with the advent of early file sharing networks based on peer-to-peer architectures such as napster (1999), gnutella, kazaa and later, bittorrent, interest in P2P file sharing and peer-to-peer architectures dramatically increased and were seen as unique in overcoming obvious limitations in client-server systems. The early Internet was designed as a peer to peer network where all computer systems were equally privileged and most interactions were bi-directional. Each computer is considered a node in the system and together these nodes form the P2P network. The peer-to-peer model differs in that all hosts are equally privileged and act as both suppliers and consumers of resources, such as network bandwidth and computer processing. All of these applications have specific server-side functionality that implements the protocol but the roles of supplier and consumer of resources are clearly divided. Some examples of widely used client-server applications are HTTP, FTP, rsync and Cloud Services. The server provides its clients with data, and can also receive data from clients. In this server model, the server needs to be online all the time with good connectivity. The client-server architecture designates one computer or host as a server and other PCs as clients. With this transformation, the client-server architecture became the most commonly used approach for data transfer with new terms like “webserver” cementing the idea of dedicated computer systems and a server model for this content. With the widespread adoption of the World Wide Web and HTTP in the mid-1990s, the Internet was transformed from an early peer-to-peer network into a content consumption network. To understand why, let’s first examine some definitions and history between the two models. 
For those of you unwilling to spend a few minutes reading through the article, I’ll let you in on a spoiler – peer-to-peer is always better than client-server. In this post, we compare the client-server architecture to peer-to-peer (P2P) networks and determine when the client-server architecture is better than P2P.
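
The availability difference between the two models described in this post can be made concrete with a toy model. The sketch below is illustrative only: the `Node` class, the two `fetch_*` functions and the file name are hypothetical names invented for this example, and it assumes every peer already holds a full copy of the file, which real P2P networks do not guarantee.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A host that may hold files and may be online or offline (hypothetical model)."""
    name: str
    files: set = field(default_factory=set)
    online: bool = True


def fetch_client_server(server: Node, filename: str) -> bool:
    """Client-server: the request succeeds only if the single server is up."""
    return server.online and filename in server.files


def fetch_p2p(peers: list, filename: str) -> bool:
    """P2P: the request succeeds if any online peer holds the file."""
    return any(p.online and filename in p.files for p in peers)


# Assumption: the file is fully replicated on the server and on every peer.
server = Node("server", {"report.pdf"})
peers = [Node(f"peer{i}", {"report.pdf"}) for i in range(3)]

# Simulate a failure of the server and of one peer.
server.online = False
peers[0].online = False

print(fetch_client_server(server, "report.pdf"))  # False: the only supplier is down
print(fetch_p2p(peers, "report.pdf"))             # True: two peers can still supply it
```

In this simplified picture, the client-server request fails as soon as the one server is unreachable, while the P2P request fails only when every peer holding the file is offline at the same time.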
